Deep Speaker Feature Learning

Project name

Deep Speaker Feature Learning

Project members

Dong Wang, Lantian Li, Zhiyuan Tang

Introduction

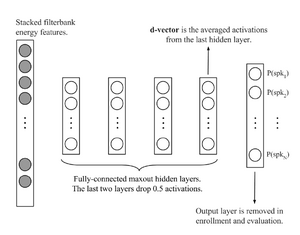

The key idea of speaker feature learning is to train a deep neural network (DNN) to discriminate the training speakers from short-time frames, an approach that dates back to 2014 with Variani et al. [2]. As shown below, the output units of the DNN correspond to the training speakers, and the frame-level speaker features are read from the last hidden layer. The basic assumption is: if the output of the last hidden layer works well as the input feature of the last affine layer (a softmax regression classifier), these features should be speaker discriminative.

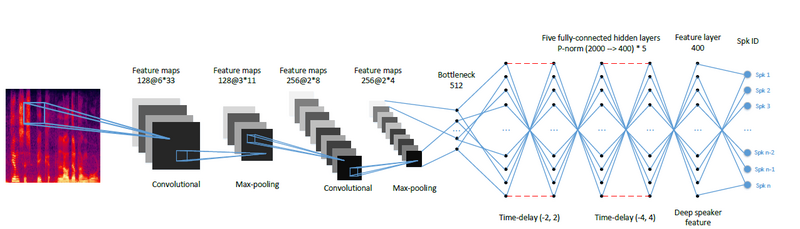
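The feature-extraction idea above can be illustrated with a minimal sketch. It is not the authors' implementation: the layer sizes, the ReLU activation, the random weights, and the helper names (`affine`, `random_layer`, `forward`) are all assumptions chosen for illustration. It only shows where the frame-level speaker feature is read relative to the softmax classifier over training speakers.

```python
import math
import random

def affine(x, weights, bias):
    """Compute W x + b for one frame; weights is a list of rows."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]

def relu(x):
    # Assumed activation; the original networks may use a different one.
    return [max(0.0, v) for v in x]

def softmax(z):
    m = max(z)  # subtract the maximum for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def random_layer(rng, n_out, n_in):
    """Hypothetical helper: an untrained layer with small random weights."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out

def forward(frame, hidden_layers, output_layer):
    """Return (speaker_feature, speaker_posteriors) for one input frame."""
    h = frame
    for weights, bias in hidden_layers:
        h = relu(affine(h, weights, bias))
    feature = h  # activation of the last hidden layer = frame-level speaker feature
    posteriors = softmax(affine(feature, *output_layer))  # last affine layer + softmax
    return feature, posteriors

rng = random.Random(0)
FRAME_DIM, HIDDEN_DIM, NUM_SPEAKERS = 8, 4, 3  # hypothetical sizes
hidden_layers = [random_layer(rng, HIDDEN_DIM, FRAME_DIM),
                 random_layer(rng, HIDDEN_DIM, HIDDEN_DIM)]
output_layer = random_layer(rng, NUM_SPEAKERS, HIDDEN_DIM)

frame = [rng.uniform(-1.0, 1.0) for _ in range(FRAME_DIM)]
feature, posteriors = forward(frame, hidden_layers, output_layer)
```

During training only `posteriors` enters the loss; at test time the output layer is ignored and `feature` is kept.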

However, the vanilla structure of Variani et al. performs rather poorly compared to its i-vector counterpart. One reason is the simple back-end, which merely averages the frame-level features to derive utterance-level representations (called d-vectors); another is that the vanilla DNN structure does little to exploit context or learn patterns. We therefore proposed a CT-DNN (convolutional time-delay DNN) model that can learn stronger speaker features. The structure is shown below [1]:

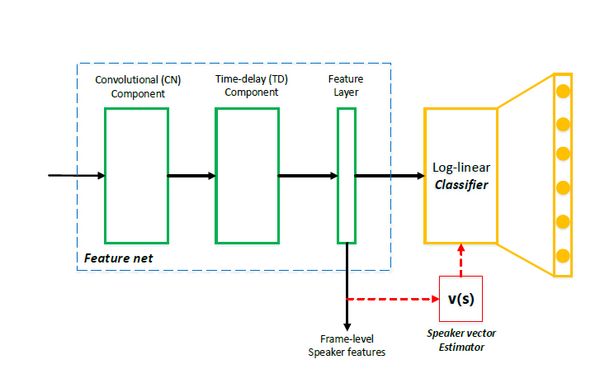
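Whichever network produces the frame-level features, the averaging back-end mentioned above can be sketched in a few lines. This is a minimal illustration, assuming cosine similarity as the scoring function (the text only states that the d-vector is an average); the function names and the toy feature values are hypothetical.

```python
import math

def d_vector(frame_features):
    """Average frame-level speaker features into one utterance-level d-vector."""
    n = len(frame_features)
    dim = len(frame_features[0])
    return [sum(f[i] for f in frame_features) / n for i in range(dim)]

def cosine_score(a, b):
    """Cosine similarity between two d-vectors (assumed scoring function)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy frame-level features (hypothetical values, 3 dimensions, 2 frames each).
enroll_frames = [[1.0, 0.0, 0.2], [0.8, 0.2, 0.0]]
test_frames = [[0.9, 0.1, 0.1], [1.1, 0.1, 0.1]]
impostor_frames = [[0.0, 1.0, 0.9], [0.2, 0.8, 1.1]]

enroll = d_vector(enroll_frames)
same_score = cosine_score(enroll, d_vector(test_frames))
diff_score = cosine_score(enroll, d_vector(impostor_frames))
```

A verification decision would then compare the score with a threshold tuned on development data.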

Recently, we found that an 'all-info' training is effective for learning features. Looking back at DNN and CT-DNN, the features read from the last hidden layer are discriminative, but not 'all discriminative', because some discriminative information can also be implemented in the last affine layer. A better strategy is to let the feature generation net (feature net) learn everything needed for discrimination. To achieve this, we discarded the parametric classifier (the last affine layer) and used the simple cosine distance to conduct the classification. An iterative training scheme implements this idea: after each epoch, the speaker features are averaged to derive speaker vectors, and the speaker vectors then replace the parameters of the last affine layer. Training then proceeds as usual. The new structure is described in [4].
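The per-epoch update and the cosine-based classifier can be sketched as follows. This is a simplified illustration under stated assumptions, not the procedure of [4]: the feature net itself is omitted, the features are toy values, and the `scale` factor applied before the softmax is a hypothetical choice.

```python
import math

def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def speaker_vectors(features, speaker_ids):
    """Average the frame-level features of each speaker (run once per epoch)."""
    sums, counts = {}, {}
    for feat, spk in zip(features, speaker_ids):
        acc = sums.setdefault(spk, [0.0] * len(feat))
        for i, x in enumerate(feat):
            acc[i] += x
        counts[spk] = counts.get(spk, 0) + 1
    return {spk: [x / counts[spk] for x in acc] for spk, acc in sums.items()}

def cosine_posteriors(feature, vectors, scale=10.0):
    """Softmax over scaled cosine similarities; there are no trainable classifier weights."""
    f = normalize(feature)
    logits = {spk: scale * sum(a * b for a, b in zip(f, normalize(v)))
              for spk, v in vectors.items()}
    m = max(logits.values())  # subtract the maximum for numerical stability
    exps = {spk: math.exp(z - m) for spk, z in logits.items()}
    total = sum(exps.values())
    return {spk: e / total for spk, e in exps.items()}

# Toy features that a feature net might have produced during one epoch.
features = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.8]]
speaker_ids = ["spk_a", "spk_a", "spk_b", "spk_b"]

vectors = speaker_vectors(features, speaker_ids)     # refreshed after each epoch
posteriors = cosine_posteriors([0.8, 0.1], vectors)  # classify one new frame
predicted = max(posteriors, key=posteriors.get)
```

Because the speaker vectors are derived from the features rather than learned separately, the only way for training to lower the classification loss is to improve the feature net.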

Research directions

- Adversarial factor learning

- Phone-aware multiple d-vector back-end for speaker recognition

- TTS adaptation based on speaker factors

References

[1] Lantian Li, Yixiang Chen, Ying Shi, Zhiyuan Tang, and Dong Wang, "Deep speaker feature learning for text-independent speaker verification", Interspeech 2017.

[2] Ehsan Variani, Xin Lei, Erik McDermott, Ignacio Lopez Moreno, and Javier Gonzalez-Dominguez, "Deep neural networks for small footprint text-dependent speaker verification", ICASSP 2014.

[3] Lantian Li, Dong Wang, Yixiang Chen, Ying Shi, and Zhiyuan Tang, http://wangd.cslt.org/public/pdf/spkfact.pdf

[4] Lantian Li, Zhiyuan Tang, and Dong Wang, "Full-info training for deep speaker feature learning", http://wangd.cslt.org/public/pdf/mlspk.pdf

[5] Zhiyuan Tang, Lantian Li, Dong Wang, and Ravi Vipperla, "Collaborative Joint Training with Multi-task Recurrent Model for Speech and Speaker Recognition", IEEE Trans. on Audio, Speech and Language Processing, vol. 25, no. 3, March 2017.

[6] Dong Wang, Lantian Li, Ying Shi, Yixiang Chen, and Zhiyuan Tang, "Deep Factorization for Speech Signal", https://arxiv.org/abs/1706.01777